11 Server

The server is for serving up any web services outside those of Github (e.g., website, docs and R package msens) using Docker (see the docker-compose.yml; with reverse proxying from subdomains to ports by Caddy).

The full architecture is summarized in the Software overview. This chapter documents the concrete services running on the host and how Caddy maps subdomains to container ports.

11.1 Setup

For the latest instructions on launching an Amazon instance and installing the server software, see Server Setup · MarineSensitivity/server Wiki, which is pasted below for convenience…

on AWS as EC2 instance using Docker

11.1.1 launch instance

name: msens1:

- Software Image (AMI)

Canonical, Ubuntu, 22.04 LTS, amd64 jammy image build on 2023-09-19 ami-0fc5d935ebf8bc3bc - Virtual server type (instance type)

t2.xlarge (4 vCPU, 16 GB memory)

- Firewall (security group)

New security group - Storage (volumes)

2 volume(s)- 20 GB

/server software, disposable - 60 GB

/sharefor all data, persistent and to be backed up

- 20 GB

11.1.1.1 allocate IP address

Elastic IP addresses | EC2 | us-east-1 for persistent IP address

Allocated IPv4 address:

100.25.173.0Associate Elastic IP address

11.1.2 ssh to server

pem='/Users/bbest/My Drive/private/msens_key_pair.pem'

ssh -i $pem ubuntu@msens1.marinesensitivity.org11.1.2.1 set hostname

sudo vi /etc/cloud/cloud.cfg

# preserve_hostname: true

sudo hostnamectl set-hostname msens1.marinesensitivity.org

sudo reboot11.1.2.2 mount volume

The extra volume (60 GB for /share) was added during EC2 launch instance wizard, but needs to be mounted before available for use.

df -HFilesystem Size Used Avail Use% Mounted on

/dev/root 21G 2.3G 19G 11% /

tmpfs 8.4G 0 8.4G 0% /dev/shm

tmpfs 3.4G 898k 3.4G 1% /run

tmpfs 5.3M 0 5.3M 0% /run/lock

/dev/xvda15 110M 6.4M 104M 6% /boot/efi

tmpfs 1.7G 4.1k 1.7G 1% /run/user/1000lsblkNAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

loop0 7:0 0 24.6M 1 loop /snap/amazon-ssm-agent/7528

loop1 7:1 0 55.7M 1 loop /snap/core18/2790

loop2 7:2 0 63.5M 1 loop /snap/core20/2015

loop3 7:3 0 111.9M 1 loop /snap/lxd/24322

loop4 7:4 0 40.8M 1 loop /snap/snapd/20092

xvda 202:0 0 20G 0 disk

├─xvda1 202:1 0 19.9G 0 part /

├─xvda14 202:14 0 4M 0 part

└─xvda15 202:15 0 106M 0 part /boot/efi

xvdb 202:16 0 60G 0 disksudo file -s /dev/xvdb

# /dev/xvdb: dataSo no file system on /dev/xvdb yet.

sudo mkfs -t xfs /dev/xvdb

sudo mkdir /share

sudo mount /dev/xvdb /sharesudo cp /etc/fstab /etc/fstab.orig

sudo blkid

# /dev/xvdb: UUID="bc766dfb-1c42-49cf-9320-2242a2d48a2e" BLOCK_SIZE="512" TYPE="xfs"

sudo vim /etc/fstab

# UUID=bc766dfb-1c42-49cf-9320-2242a2d48a2e /share xfs defaults,nofail 0 2

df -h

sudo umount /share ; df -h

sudo mount -a ; df -h11.1.3 install docker

Following:

sudo apt-get update

#OLD: sudo apt-get install docker.io -yNEW: [[Migrate to docker compose]]

sudo systemctl start docker

sudo docker run hello-world

sudo systemctl enable docker

docker --version

# Docker version 24.0.6, build ed223bc

sudo usermod -a -G docker $(whoami)11.1.3.1 run docker compose

- /Users/bbest/My Drive/private/

msens_server_env-password.txt

sudo chown -R ubuntu:ubuntu /share

mkdir -p /share/github/MarineSensitivity

cd /share/github/MarineSensitivity

# clone server repo

git clone https://github.com/MarineSensitivity/server.git

cd server

# add password, used as $PASSWORD in docker-compose.yml

echo 'PASSWORD=******' > .env

# launch docker instances

sudo docker-compose up -d11.2 Docker compose

The Docker compose file is used to define and run multi-container Docker applications. Here is the docker-compose.yml file for the server pasted for convenience …

version: "3.9"

services:

caddy:

container_name: caddy

build: ./caddy

ports:

- 80:80

- 443:443

restart: unless-stopped

volumes:

- ./caddy/Caddyfile:/etc/caddy/Caddyfile

- /share:/share

- /share/caddy/data:/data

- /share/caddy/config:/config

rstudio:

container_name: rstudio

build: ./rstudio

environment:

ROOT: 'true'

USER: admin

PASSWORD: ${PASSWORD}

ADD: shiny

ports:

- 8787:8787 # rstudio

- 3838:3838 # shiny

restart: unless-stopped

volumes:

- /share:/share

- /share/shiny_apps:/srv/shiny-server

plumber:

container_name: plumber

build: ./plumber

ports:

- 8888:8888 # api

restart: unless-stopped

volumes:

- /share:/share

depends_on:

- postgis

postgis:

container_name: postgis

image: postgis/postgis:latest

environment:

POSTGRES_DB: msens

POSTGRES_USER: admin

POSTGRES_PASSWORD: ${PASSWORD}

ANON_PASSWORD: ${ANON_PASSWORD}

PGDATA: /share/postgis/data

volumes:

# all files in /docker-entrypoint-initdb.dare automatically executed

# in alphabetical order on container creation

- ./postgis/init.sh:/docker-entrypoint-initdb.d/init.sh # add user anon

- /share:/share

- /share/postgis:/var/lib/postgresql

restart: unless-stopped

healthcheck:

test: 'exit 0'

ports:

- 5432:5432

pgadmin:

container_name: pgadmin

image: dpage/pgadmin4:8.14

restart: always

environment:

PGADMIN_DEFAULT_EMAIL: ben@ecoquants.com

PGADMIN_DEFAULT_PASSWORD: ${PASSWORD}

PGADMIN_LISTEN_PORT: 8088

# PGADMIN_CONFIG_CONFIG_DATABASE_URI: "'postgresql://admin:$PASSWORD@postgis:5432/msens'"

ports:

- 8088:8088

volumes:

- /share/pgadmin:/var/lib/pgadmin

depends_on:

- postgis

pgbkups:

container_name: pgbkups

image: prodrigestivill/postgres-backup-local

restart: always

user: postgres:postgres # Optional: see below

volumes:

- /share/postgis_backups:/backups

# sudo mkdir /share/postgis_backups; sudo chown -R 999:999 /share/postgis_backups

links:

- postgis

depends_on:

- postgis

environment:

- POSTGRES_HOST=postgis

- POSTGRES_DB=msens

- POSTGRES_USER=admin

- POSTGRES_PASSWORD=${PASSWORD}

- POSTGRES_EXTRA_OPTS=-Z6 --blobs

- SCHEDULE=@daily

- HEALTHCHECK_PORT=8088

- BACKUP_KEEP_DAYS=2

- BACKUP_KEEP_WEEKS=1

- BACKUP_KEEP_MONTHS=2

tile:

container_name: tile

environment:

DATABASE_URL: 'postgresql://admin:${PASSWORD}@postgis:5432/msens'

image: pramsey/pg_tileserv:latest

depends_on:

- postgis

ports:

- 7800:7800

tilecache:

container_name: tilecache

image: varnish:latest # 7.4.2 # last updated: 2023-12-26

volumes:

- /share:/share

# - "./varnish/default.vcl:/etc/varnish/default.vcl"

ports:

- 6081:6081

environment:

# VARNISH_SIZE: '2G'

VARNISH_BACKEND_HOST: tile # .marinesensitivity.org

VARNISH_BACKEND_PORT: 7800

VARNISH_HTTP_PORT: 6081 # VARNISH_PROXY_PORT: '6081'

#command: "-p default_keep=43200" # 60*60*12 = 43200 sec = 12 hrs

restart: always

depends_on:

- "tile"

# rest:

# container_name: rest

# environment:

# PGRST_DB_URI: 'postgresql://anon:${ANON_PASSWORD}@postgis:5432/msens'

# PGRST_OPENAPI_SERVER_PROXY_URI: http://127.0.0.1:3000

# PGRST_DB_ANON_ROLE: anon # db-anon-role

# image: postgrest/postgrest

# depends_on:

# - postgis

# ports:

# - "3000:3000"

# swagger:

# container_name: swagger

# image: swaggerapi/swagger-ui

# depends_on:

# - rest

# ports:

# - "8080:8080"

# expose:

# - "8080"

# environment:

# API_URL: https://rest.MarineSensitivity.org/

titiler:

container_name: titiler

build: ./titiler

environment:

PORT: 8000

# WORKERS_PER_CORE: 1

MSENS_CELLID_COG: /share/data/derived/r_cellid.tif

MSENS_DUCKDB: /share/data/big/latest/sdm.duckdb

MSENS_MAX_ROWS: "1000000"

MSENS_LRU_SIZE: "128"

volumes:

- /share:/share

ports:

- "8000:8000"

restart: always

titilecache:

container_name: titilecache

image: varnish:latest # 7.4.2 # last updated: 2023-12-26

volumes:

- /share:/share

- "./varnish/titiler.vcl:/etc/varnish/default.vcl:ro"

ports:

- 6082:6082

environment:

# VARNISH_SIZE: '2G'

VARNISH_BACKEND_HOST: titiler # .marinesensitivity.org

VARNISH_BACKEND_PORT: 8000

VARNISH_HTTP_PORT: 6082

command: "-p default_keep=604800" # 7d, matches vcl TTL

restart: always

depends_on:

- "titiler"11.3 DNS

The domain name server (DNS) records are managed by SquareSpace. The subdomains point to the server on Amazon at 100.25.173.0, whereas the main website is hosted by Github servers, per Managing a custom domain for your GitHub Pages site - GitHub Docs.

| Host | Type | Data |

|---|---|---|

| @ | A | 185.199.111.153 |

| @ | A | 185.199.110.153 |

| @ | A | 185.199.109.153 |

| @ | A | 185.199.108.153 |

| api | A | 100.25.173.0 |

| app | A | 100.25.173.0 |

| file | A | 100.25.173.0 |

| msens1 | A | 100.25.173.0 |

| pgadmin | A | 100.25.173.0 |

| pmtiles | A | 100.25.173.0 |

| rstudio | A | 100.25.173.0 |

| shiny | A | 100.25.173.0 |

| tile | A | 100.25.173.0 |

| tilecache | A | 100.25.173.0 |

| titiler | A | 100.25.173.0 |

| titilecache | A | 100.25.173.0 |

| www | CNAME | marinesensitivity.org |

11.4 Caddyfile

The Caddyfile parameterizes the reverse proxying between the external subdomains and the Docker’s internal ports. Here is the Caddyfile pasted for convenience …

# docker exec caddy caddy reload --config /etc/caddy/Caddyfile

{

order pmtiles_proxy before file_server

}

(cors) {

@origin header Origin *

header @origin {

Access-Control-Allow-Origin "*"

Access-Control-Request-Method GET

}

}

api.marinesensitivity.org {

reverse_proxy plumber:8888

}

file.marinesensitivity.org {

import cors

# raw pmtiles for client-side PMTiles protocol (add_pmtiles_source)

handle_path /pmtiles/* {

root * /share/data/derived/pmtiles

file_server browse

}

# legacy versioned pmtiles routes (backwards compat for old apps)

handle_path /pmtiles/v4/* {

root * /share/data/derived/v4/pmtiles

file_server browse

}

handle_path /pmtiles/v3/* {

root * /share/data/derived/v3/pmtiles

file_server browse

}

# force reports to download (especially HTML which otherwise renders inline)

handle_path /reports/* {

root * /share/public/reports

header Content-Disposition "attachment"

file_server

}

handle {

root * /share/public

file_server browse {

precompressed zstd br gzip

}

}

}

pgadmin.marinesensitivity.org {

reverse_proxy pgadmin:8088

}

# rest.marinesensitivity.org {

# reverse_proxy rest:3000

# }

rstudio.marinesensitivity.org {

reverse_proxy rstudio:8787

}

shiny.marinesensitivity.org {

reverse_proxy rstudio:3838

}

app.marinesensitivity.org {

reverse_proxy rstudio:3838

}

shiny.oceanmetrics.io {

reverse_proxy rstudio:3838

}

# swagger.marinesensitivity.org {

# reverse_proxy swagger:8080

# }

tile.marinesensitivity.org {

reverse_proxy tile:7800

}

tilecache.marinesensitivity.org {

reverse_proxy tilecache:6081

}

titiler.marinesensitivity.org {

reverse_proxy titiler:8000

}

titilecache.marinesensitivity.org {

reverse_proxy titilecache:6082

}

pmtiles.marinesensitivity.org {

import cors

# server-side tile decoding: ZXY + TileJSON (for QGIS, leaflet, etc.)

handle_path /tiles/* {

pmtiles_proxy {

bucket file:///share/data/derived/v4/pmtiles

# note: v3 pmtiles also available at /share/data/derived/v3/pmtiles

cache_size 256

public_url https://pmtiles.marinesensitivity.org/tiles

}

}

}

11.5 Services

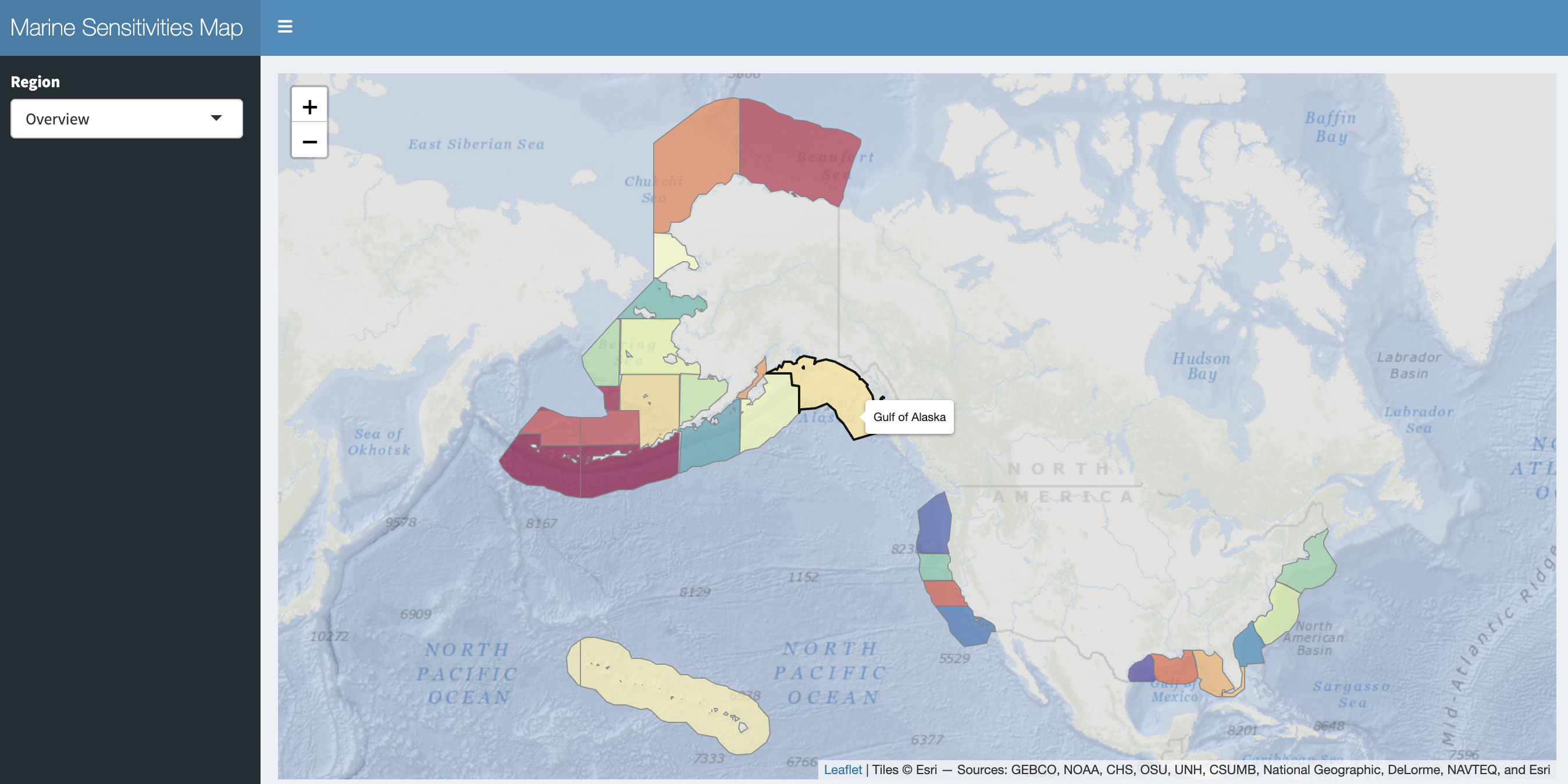

The server is running the following services. Subdomains with 🔒 are internal (admin / development); others are public.

11.5.1 App + data plane

app / shiny

interactive Shiny applications (scores,species, …) — proxied to therstudiocontainer’s Shiny Server on internal port 3838

Shiny Server docsfile

static file server for PMTiles archives, report downloads, reference GeoPackages — served by Caddy’sfile_serverdirective directly from the/sharebind-mount. This replaces the oldpg_tileservvector-tile path for Program Areas, Ecoregions, Planning Areas, protractions, blocks and aliquots; each layer is a single.pmtilesfile that the browser range-reads for only the tiles it needs.titiler + titilecache 🔒🟢

custom msens TiTiler factory fronted by a Varnish cache.

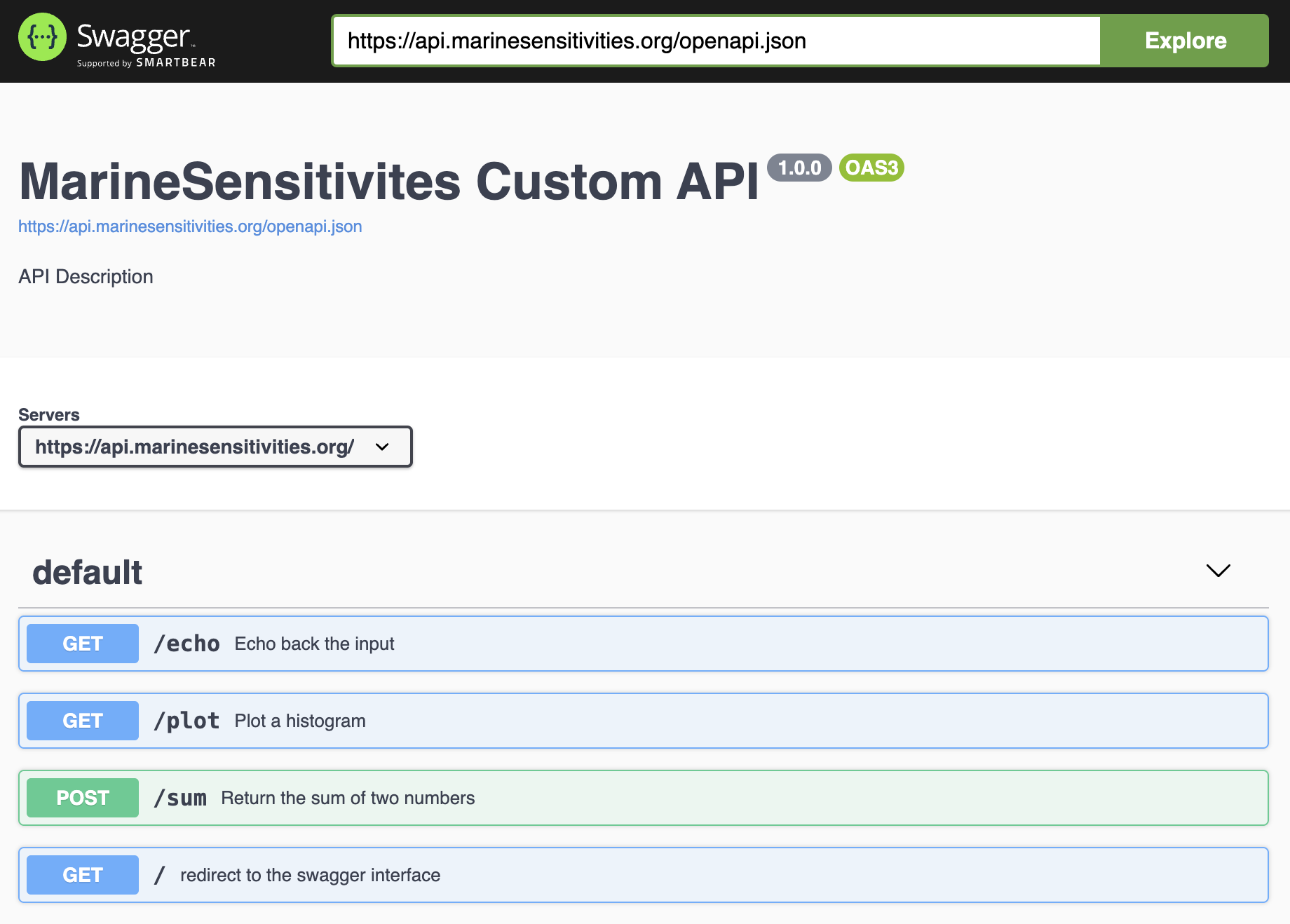

The factory exposes/msens/*routes that execute a validated SELECT against DuckDB, look the result up against a pre-baked cell-id COG, and return on-the-fly colorized PNG tiles (see Chapter 14).titilecachekeys on the full URL (including base64 SQL) and caches each tile for 7 days withdefault_keep=604800; the custom VCL (server/varnish/titiler.vcl) normalizes query-param order viastd.querysort()so semantically-identical URLs share a cache entry, strips cookies / authorization (the factory is stateless), and adds anX-Cache: HIT | MISSdebug header.api

custom RplumberAPI for programmatic access to DuckDB — see Chapter 14

11.5.2 Admin

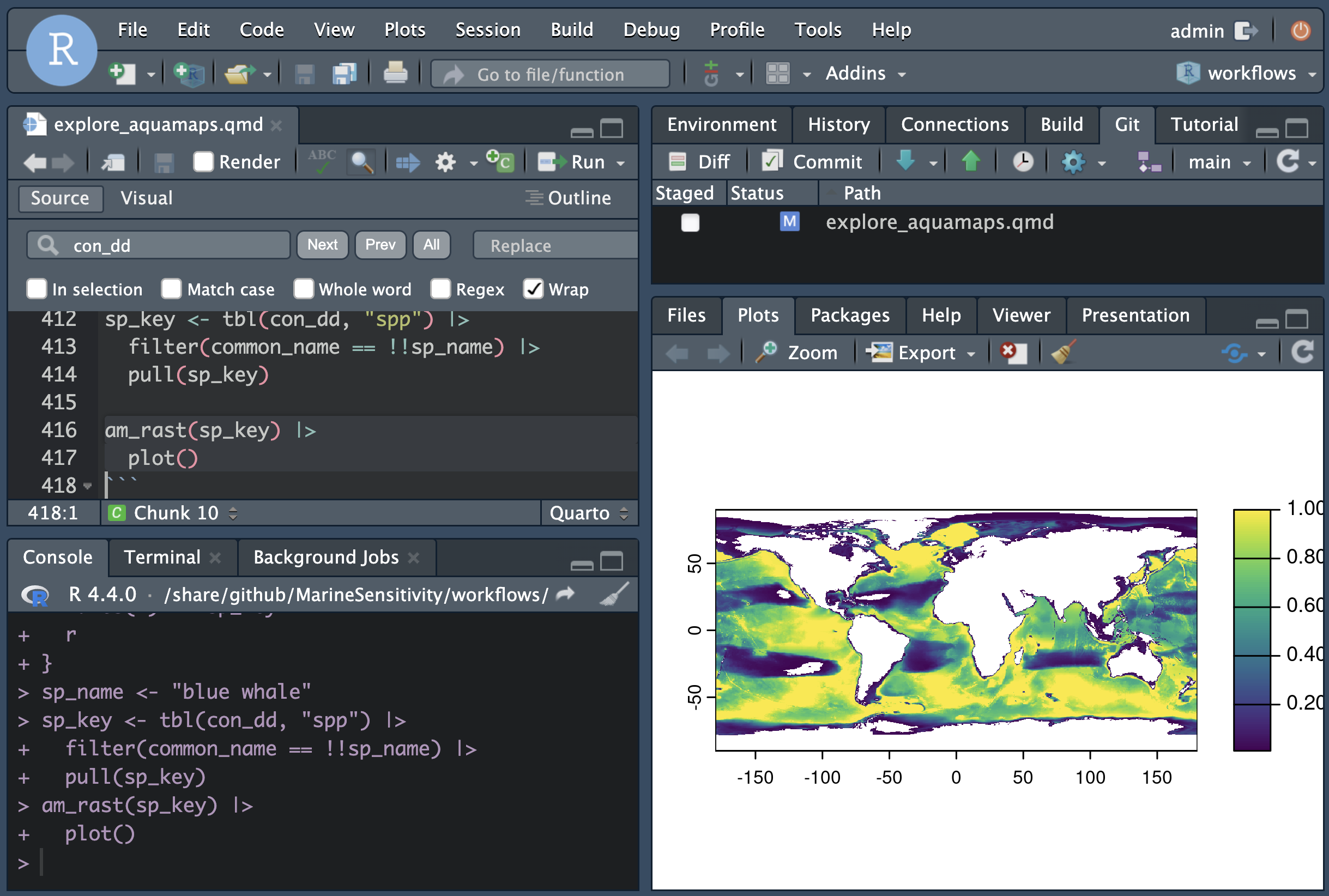

rstudio 🔒

integrated development environment (IDE) to code and debug directly on the server.

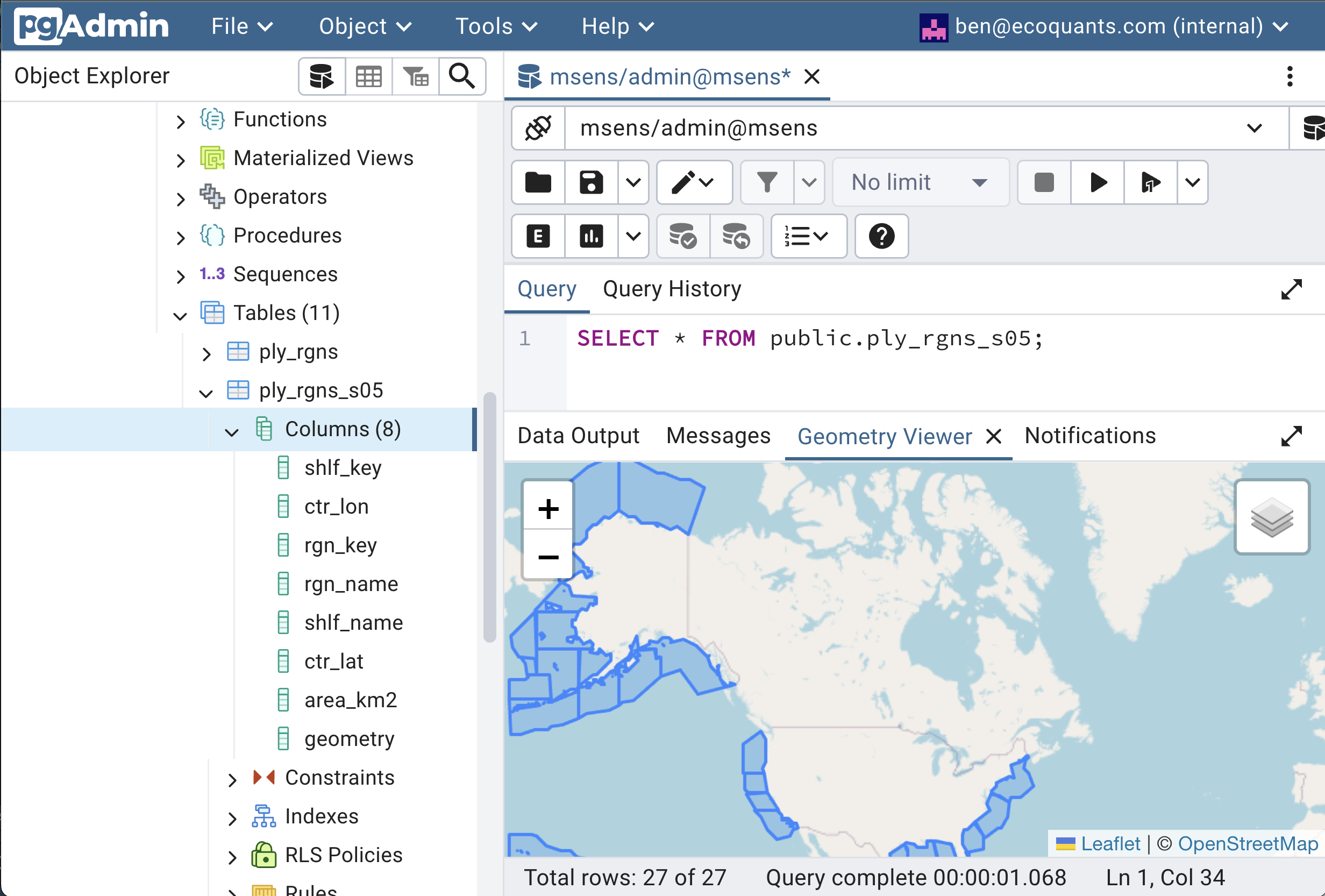

Posit RStudio Serverpgadmin 🔒

PostgreSQL database administration interface — used for legacy apps that still connect to PostgreSQL (see below).

pgAdmin

11.5.3 Legacy (carried for backward-compat; not in the critical path)

As of 2026-04, the new raster tile path (titiler + titilecache) and PMTiles-via-file replace what was previously handled by PostgreSQL + pg_tileserv. The authoritative store is now DuckDB (/share/data/big/latest/sdm.duckdb). The following services remain in docker-compose.yml only for backward compatibility with a small set of pre-2026 apps (indicators, bird_hotspots) that still read from PostgreSQL. New app code should target DuckDB + the TiTiler factory + PMTiles.

- postgis (

postgis/postgis:latest, internal 5432)

PostgreSQL + PostGIS — formerly the primary spatial database; now read by legacy apps only. - tile (

pramsey/pg_tileserv:latest, internal 7800) + tilecache (Varnish, 6081)

vector tiles from PostGIS tables — superseded by static PMTiles served by Caddy. - rest and swagger — PostgREST + Swagger UI, both commented out in

docker-compose.yml.